The scientific method is a practical way to learn about the world by testing ideas against evidence. It is not a magic recipe, and it is not reserved for laboratories. It is a set of habits—clear questions, careful measurements, and honest checks—that helps separate what seems true from what holds up when reality pushes back.

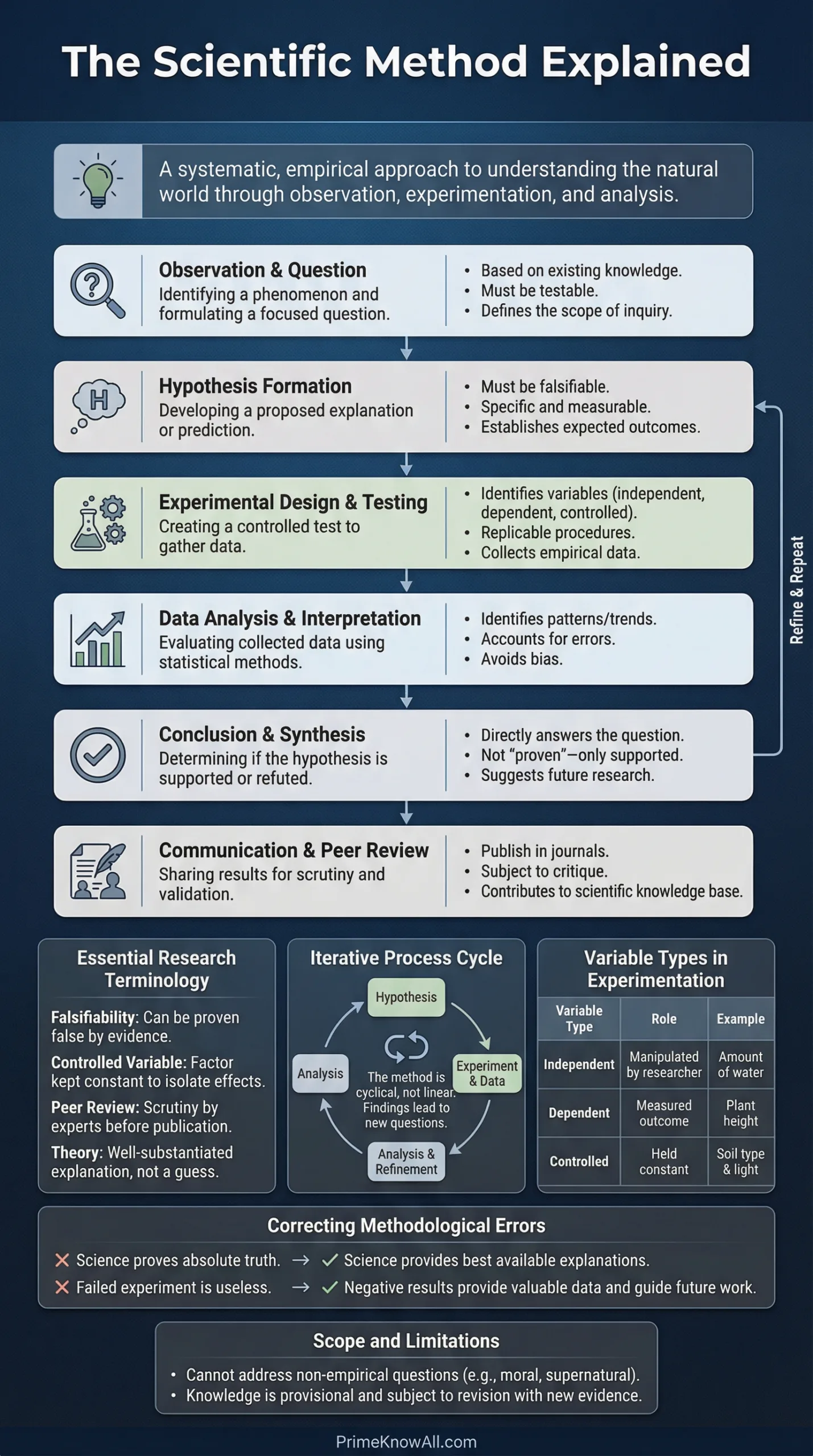

People often picture science as a neat line of steps, but real research loops and adapts. Still, a step-by-step map is useful because it shows what should happen at each stage: how a question becomes a test, how a test becomes data, and how data becomes a claim you can defend.

What The Scientific Method Really Is

The scientific method works as a feedback loop between ideas and observations. An idea suggests what should happen. Observations reveal what actually happened. When the two clash, the method forces a choice: revise the idea, improve the test, or gather better evidence.

This is why science is powerful in engineering, medicine, astronomy, and everyday problem-solving. The method does not guarantee perfect answers, but it does create reliable pressure toward answers that match the world.

Step-By-Step: From Question To Reliable Answer

Below is a clear sequence that works for many investigations. Each step has a goal and a typical output. Skipping steps usually means you still do them later—only with more confusion and less control.

- Observe something specific and measurable, not just a vague impression. Capture details with notes, photos, logs, or sensor readings.

- Ask a question that can be answered with evidence. Good questions define what to measure and how large a difference would count as “different.”

- Do background research to learn what is already known. The goal is not to copy answers, but to avoid reinventing mistakes and to refine definitions and variables.

- Build a hypothesis: a testable explanation. A strong hypothesis connects cause and effect and implies a checkable prediction.

- Turn it into predictions in “if-then” form. Predictions are where a hypothesis becomes risky in a good way—reality can prove it wrong.

- Design a test (experiment, study, survey, simulation, or observation plan). Define variables, controls, sample size, and how you will reduce bias.

- Collect data with consistent procedures. Record conditions, calibration, time stamps, and anything that could affect results. Messy data is often a sign of messy methods.

- Analyze using appropriate statistics and visuals. Look for patterns, uncertainty, and alternative explanations. Treat outliers carefully, not conveniently.

- Interpret what the results mean for the hypothesis. A test rarely “proves” a hypothesis; the results support it, contradict it, or leave it uncertain.

- Share and repeat. Write methods clearly, publish, present, or document. Replication and peer review are how scientific claims earn trust beyond one team.
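
The advice in the analysis step to treat outliers carefully, not conveniently, can be sketched in code. The example below flags suspicious values for investigation instead of deleting them. It assumes Tukey's interquartile-range fences with the conventional multiplier of 1.5, which is only one of several possible rules; the function name `flag_outliers` and the readings are made up for illustration.

```python
import statistics


def flag_outliers(values, k=1.5):
    """Pair each value with True if it falls outside Tukey's IQR fences."""
    # statistics.quantiles with n=4 returns the three quartile cut points.
    q1, _median, q3 = statistics.quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [(v, v < low or v > high) for v in values]


# Made-up readings for illustration only.
readings = [10.1, 10.3, 9.9, 10.0, 10.2, 14.8]
flagged = [v for v, is_outlier in flag_outliers(readings) if is_outlier]
print("Investigate before deciding:", flagged)
```

Flagging keeps the full dataset intact, so the decision to keep or exclude a point can be documented and justified rather than made silently.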

A useful rule: the scientific method is less about being “right” and more about being checkable. If a claim cannot be tested or could never be wrong, it cannot be improved by evidence.

Key Pieces You Should Name Clearly

Many investigations fail because the parts are fuzzy. Clear naming makes your test repeatable and your conclusions defensible.

- Independent variable: what you change (or what differs naturally in a study). Example: light intensity.

- Dependent variable: what you measure as an outcome. Example: plant growth in centimeters per week.

- Controls: conditions you keep consistent so results are interpretable. Example: same soil, water schedule, and pot size.

- Control group: a baseline condition for comparison. Example: plants kept at a standard light level.

- Confounders: hidden factors that can mimic cause-and-effect. Example: a warmer lamp may change temperature, not just light.

Hypothesis Vs Theory Vs Law

A hypothesis is a testable explanation for a specific situation. A theory is a broad, well-supported explanation that accounts for many results and keeps surviving tough tests. A law often describes a consistent pattern (frequently in mathematical form) without necessarily explaining why. These words have precise meanings in science, even if everyday speech uses them loosely.

Common Pitfalls And How To Avoid Them

The scientific method works best when it actively fights human habits like seeing what you hope to see. A few simple safeguards can raise the quality of almost any project.

- Confirmation bias: favoring evidence that supports your idea. Fix it with predefined criteria and by looking for ways your hypothesis could fail.

- Changing the question midstream: redefining success after results appear. Fix it by writing your question and metrics before collecting data.

- Too-small samples: drawing conclusions from noise. Fix it by increasing sample size, repeating trials, or running a power analysis to estimate how many samples you need.

- Confusing correlation with causation: two things move together, but one may not cause the other. Fix it with controls, randomization, and explicit tests of alternative explanations.

- Poor measurement: inconsistent tools or unclear definitions. Fix it with calibration, standard procedures, and explicit units recorded for every measurement.
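
As a rough illustration of the power estimates mentioned for too-small samples, the sketch below approximates how many measurements each group needs when comparing two means. It assumes a two-sided test, roughly normal data, equal spread in both groups, and the common defaults of 5% significance and 80% power. The function name `samples_per_group` is hypothetical, and the normal approximation slightly underestimates what a t-test would require.

```python
import math
from statistics import NormalDist


def samples_per_group(effect, sd, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sample comparison of means.

    effect: smallest difference in means worth detecting (same units as sd)
    sd:     expected standard deviation within each group
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided critical value
    z_power = NormalDist().inv_cdf(power)
    n = 2 * ((z_alpha + z_power) * sd / effect) ** 2
    return math.ceil(n)


# Detecting a difference as large as one standard deviation:
print(samples_per_group(effect=1.0, sd=1.0))
# Detecting a difference half that size needs roughly four times as many:
print(samples_per_group(effect=0.5, sd=1.0))
```

The required sample grows with the square of the ratio of noise to effect, which is why small effects in noisy measurements demand many more trials than intuition suggests.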

Practical Checks Before You Run A Test

These checks keep projects from drifting into ambiguous results. They are simple, but they protect interpretability.

- Define what “success” and “failure” mean using numbers or clear categories.

- Write the procedure as if a stranger must follow it without guessing. That is replicability in practice.

- Identify what could bias outcomes and add a countermeasure: randomization, blinding, or standardized timing.

- Plan how you will store data, including units, timestamps, and metadata.
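
One way to act on the last check is to fix the shape of every record before the first measurement. The sketch below is a minimal example, not a standard: the `Reading` fields, the instrument name, and the CSV layout are all assumptions chosen for illustration.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import datetime, timezone


@dataclass
class Reading:
    """One measurement with the context needed to interpret it later."""
    trial_id: str
    condition: str
    timestamp_utc: str  # ISO 8601 text, so time zones are unambiguous
    value: float
    unit: str           # stored with every value, never implied
    instrument: str
    note: str = ""      # anomalies are recorded here, not hidden


def to_csv(readings):
    """Serialize readings to CSV text with a header row."""
    buf = io.StringIO(newline="")
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(Reading)])
    writer.writeheader()
    for reading in readings:
        writer.writerow(asdict(reading))
    return buf.getvalue()


# Hypothetical example record.
now = datetime.now(timezone.utc).isoformat()
print(to_csv([Reading("T01", "Material A", now, 68.5, "degC", "thermometer-1")]))
```

Deciding the columns up front makes missing context visible immediately, because an empty unit or instrument field stands out in a way that a forgotten detail does not.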

A Short Example That Shows Every Step

Consider a clean, everyday question: Does a new insulation material reduce heat loss better than a standard one? The steps below show how the method turns this into a test that other people can repeat and trust.

- Observation: Similar containers cool at different rates depending on insulation thickness and material.

- Question: Under the same conditions, does Material A reduce heat loss more than Material B?

- Background research: Review heat transfer basics (conduction, convection, radiation) and check known R-values or thermal conductivities if available.

- Hypothesis: Material A reduces heat loss because its structure lowers effective thermal conductivity.

- Prediction: If both containers start at the same temperature, the one insulated with Material A will show a smaller temperature drop after a fixed time.

- Test design: Use identical containers, equal insulation thickness, consistent room airflow, and a calibrated thermometer. Run multiple trials so random variation averages out.

- Data collection: Record temperature at fixed intervals, plus room temperature and start temperature for each trial.

- Analysis: Plot cooling curves, compare average cooling rates, and calculate uncertainty. Look for systematic differences rather than a single lucky run.

- Interpretation: If Material A consistently slows cooling beyond uncertainty, results support the hypothesis. If not, revise the hypothesis (maybe convection dominates) or adjust the design.
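
The analysis and interpretation steps above can be sketched as a small comparison of temperature drops across trials. The numbers are invented purely for illustration and are not measurements. Requiring the difference to exceed twice the combined standard error is an assumed rule of thumb, not a formal significance test, and the function names are hypothetical.

```python
import math
import statistics


def summarize(drops):
    """Return the mean temperature drop and its standard error."""
    mean = statistics.mean(drops)
    sem = statistics.stdev(drops) / math.sqrt(len(drops))
    return mean, sem


def compare(drops_a, drops_b, k=2.0):
    """Check whether A's smaller drop exceeds k combined standard errors."""
    mean_a, sem_a = summarize(drops_a)
    mean_b, sem_b = summarize(drops_b)
    diff = mean_b - mean_a  # positive means Material A lost less heat
    uncertainty = math.sqrt(sem_a**2 + sem_b**2)
    return diff, uncertainty, diff > k * uncertainty


# Invented temperature drops in degrees Celsius after a fixed time, one per trial.
material_a = [8.1, 7.9, 8.4, 8.0, 8.2]
material_b = [10.2, 9.8, 10.5, 10.1, 9.9]

diff, uncertainty, supported = compare(material_a, material_b)
print(f"difference = {diff:.2f} +/- {uncertainty:.2f} degC, supported: {supported}")
```

Reporting the difference together with its uncertainty keeps the claim honest: a large gap with a small uncertainty supports the hypothesis, while a gap smaller than the noise leaves it undecided.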

A Simple Table To Keep The Steps Straight

This table summarizes what each stage should produce. It also highlights a common mistake that can quietly break the logic of a study.

| Step | Main Output | Typical Pitfall |
|---|---|---|
| Observation | Recorded pattern (notes, measurements, logs) | Relying on memory instead of records |
| Question | Testable question with defined variables | Question is too broad to measure cleanly |
| Hypothesis | Explanation that can be wrong | Writing a claim that is not falsifiable |
| Prediction | Expected outcome under conditions | Vague predictions with no numbers |
| Test Design | Procedure, controls, sample plan | Confounders left unmanaged, making results ambiguous |
| Data Collection | Dataset plus metadata (units, timing) | Inconsistent measurement or missing context |
| Analysis | Statistics, plots, uncertainty estimates | Cherry-picking results that “look good” |
| Interpretation | Claim with limits and alternatives | Overstating what the data can support |
| Communication | Methods others can reproduce | Leaving out details that make replication impossible |

Why Real Science Looks Less Linear Than Textbook Diagrams

In practice, researchers often move backward and sideways. A surprising measurement can force a new hypothesis. A new instrument can reveal a better variable to track. This is not a flaw—it is a sign of responsiveness to evidence.

Some fields also cannot rely on classic “change one variable” experiments. In astronomy or deep-earth science, researchers use observational tests, natural experiments, and models. The logic remains the same: predictions meet data, and claims survive only if they keep matching the world better than alternatives.

Science advances when methods are clear enough that other people can challenge, repeat, and improve them. That social pressure is part of the method, not an optional extra.

Tools That Strengthen Evidence Without Adding Complexity

You do not need advanced equipment to follow the scientific method, but a few habits make outcomes more trustworthy with minimal effort.

- Randomization: assign conditions by chance so hidden patterns cannot line up with the treatment.

- Blinding: when feasible, hide which condition is which during measurement to reduce bias.

- Replication: repeat trials, and repeat them on different days or setups to test robustness.

- Predefined analysis: decide how you will analyze data before seeing it, so results are not shaped by hindsight.

- Error tracking: record calibration, measurement uncertainty, and any anomalies instead of hiding them.
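
Randomization and blinding from the list above can be combined in a few lines. This is a minimal sketch under stated assumptions: the function name `assign_blinded`, the code format, and the unit names are hypothetical, and a recorded seed is used so the assignment can be reproduced and audited later.

```python
import random


def assign_blinded(units, conditions, seed):
    """Randomly assign units to conditions and hide labels behind codes.

    Returns (blinded, key): `blinded` maps each unit to an opaque code that
    the person measuring sees; `key` maps codes to conditions and stays
    sealed until measurement is finished.
    """
    rng = random.Random(seed)  # seeded so the assignment is reproducible
    # Balanced allocation: cycle through conditions, then shuffle the order.
    slots = [conditions[i % len(conditions)] for i in range(len(units))]
    rng.shuffle(slots)
    codes = [f"S{n:03d}" for n in range(1, len(units) + 1)]
    rng.shuffle(codes)  # codes carry no hint about the condition
    blinded, key = {}, {}
    for unit, condition, code in zip(units, slots, codes):
        blinded[unit] = code
        key[code] = condition
    return blinded, key


units = ["pot-1", "pot-2", "pot-3", "pot-4", "pot-5", "pot-6"]
blinded, key = assign_blinded(units, ["standard light", "high light"], seed=2024)
print(blinded)  # safe to share with whoever takes the measurements
```

Keeping the key separate from the measurement sheet means the person recording outcomes cannot, even unconsciously, favor one condition.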

Ethics And Safety Are Part Of Good Method

Strong science respects people, animals, and environments. That means following ethical review where needed, protecting privacy, minimizing harm, and documenting how risks were reduced. Even in simple projects, safety and honesty improve results because they encourage careful planning and transparent reporting.

What To Take Away When You Read Science News

When a new study makes a bold claim, the scientific method provides a calm checklist for reading it. Look for a clear question, a test that actually challenges the idea, and data strong enough to support the conclusion without stretching. If a result is surprising, the most scientific response is not instant belief or dismissal, but curiosity about replication, uncertainty, and alternative explanations.

FAQ

Is the scientific method always the same set of steps?

No. The core logic stays consistent—test ideas with evidence—but the workflow can change by field. Lab experiments, field studies, and computer models can all follow the method while using different tools and constraints.

What makes a hypothesis “good”?

A good hypothesis is testable and specific. It should imply predictions that could turn out wrong. If no possible result would challenge it, the hypothesis is not useful for scientific testing.

Does science ever “prove” something?

Science usually builds confidence rather than absolute proof. A claim becomes reliable when many tests, in different contexts, keep supporting it and when alternatives fail. Good science also states limits and uncertainty.

Why is replication so important?

Replication checks whether a result depends on luck, hidden bias, or a specific setup. When different groups reproduce the same outcome with similar methods, the claim gains credibility beyond one dataset.

Can the scientific method be used outside of science classes?

Yes. Any time a decision can be improved by measuring outcomes—from testing a material to evaluating a process—the method helps. A clear question, a fair comparison, and honest data analysis often outperform guesswork and anecdotes.
